Robotics has officially moved out of the factory corner and into everyday life. What started as machines doing simple, repetitive work has expanded into real-world, human-facing services. Walk into a store and a robot recognizes you and says hello. AI-enhanced CCTV scans a space in real time and flags hazards. That’s not sci-fi anymore—that’s Tuesday.

Hyundai Motor Group’s Robotics Lab is working around the clock to make robots feel less like “tech” and more like part of the environment. The key building blocks include Facey (its facial-recognition platform), an on-device VLM (Vision Language Model), Intelligent CCTV, and NARCHON, the Lab’s robot command-and-control system. On the hardware side, DAL-e and DAL-e Delivery continue to evolve as the Lab folds in new software capabilities. We sat down with the researchers behind these systems to learn how they’re being engineered—and where they’re headed.

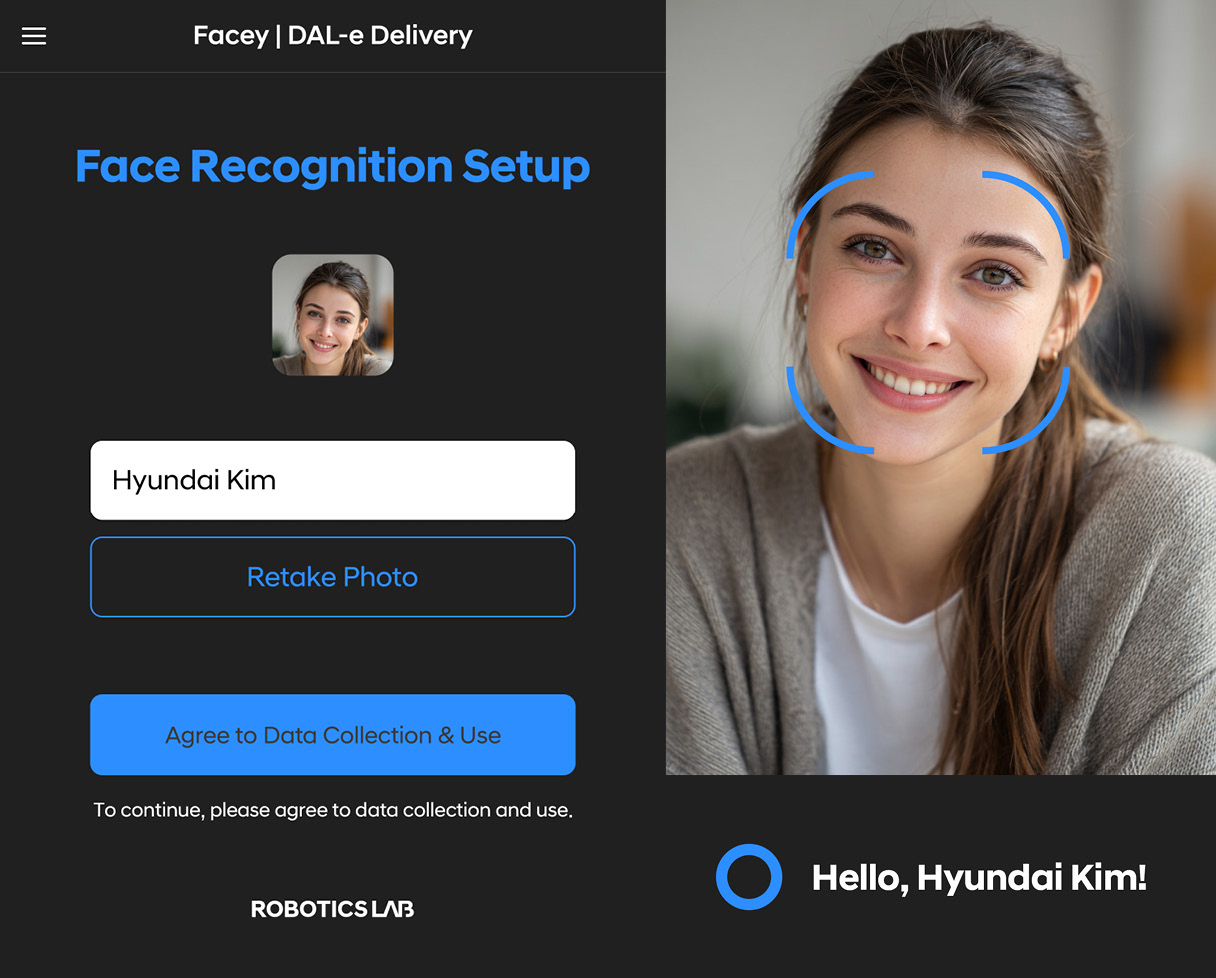

Q. Please explain the Facey facial-recognition system.

Part Leader Moon-sub Jin | Facey is a facial-recognition system that connects robots with the infrastructure in the spaces where they operate. With a single registration, all Robotics Lab robots can recognize a user and provide friendlier, more convenient service. To build trust, we obtained biometric certification from the Korea Internet & Security Agency (KISA), and we’re currently validating our capabilities by entering the facial-recognition challenge run by the U.S. National Institute of Standards and Technology (NIST). Facey also aims to go beyond identity verification, using facial information to infer age, gender, emotion, and gaze so it can deliver services tailored to each user.

Q. Facial recognition is already widespread. Smartphones that use special cameras to read facial contours are mainstream. How does Facey differ from existing tech?

Part Leader Moon-sub Jin | 3D-scan methods deliver high accuracy on personal devices, but their short recognition range makes them a poor fit for robots or buildings. Facey was engineered to achieve high accuracy with standard 2D cameras by building massive facial-image datasets and developing AI models optimized for real-world operating environments. As a result, we produced a model with greater than 99.9% accuracy.

Q. A face is sensitive personal data. What protections are in place?

Part Leader Moon-sub Jin | We treat facial data as mission-critical, because it is. Data is encrypted both in transit and at rest, and we run a tight security program with internal reviews that include penetration testing. On top of that, we’ve built camera-only anti-spoofing to spot fakes, and we use IR (infrared) cameras so a photo or a screen can’t pass as a live person.

Introduction to HMG Robotics Lab’s facial-recognition technology

Q. The tech is already deployed at Factorial Seongsu. Any pain points in the real world?

Part Leader Moon-sub Jin | So far, no red flags. We haven’t heard about meaningful inconveniences on site, and user surveys at Hyundai Motor’s Gangnam office came back strongly positive. People especially liked the “register once, use everywhere” aspect—because the same facial profile can power access control at gates and user verification for delivery robots.

Q. How might Facey show up in everyday life—or even mobility?

Part Leader Moon-sub Jin | Contactless facial recognition has gone mainstream since COVID-19, and the Genesis GV60 already lets you unlock the doors with your face. We see in-home and in-building robot services naturally tying into identity through facial recognition. Beyond simple access control, think recipient verification for delivery robots, identifying persons of interest for security robots, and even face-based payments. The use cases are there—and they scale.

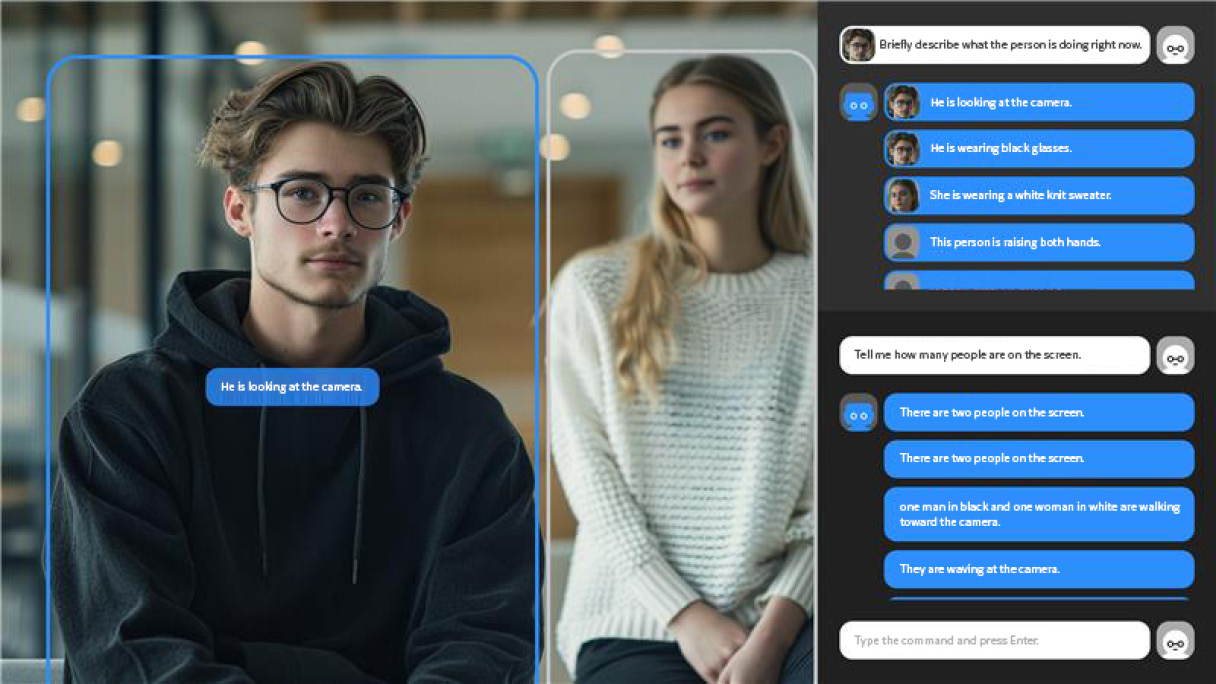

Q. What is a VLM, and what makes the Robotics Lab’s version different?

Part Leader Moon-sub Jin | A Vision Language Model (VLM) turns what a camera sees into language—describing and understanding visual information the way a person might. While LLMs like ChatGPT typically live in the cloud, our VLM is tuned to each robot’s job and runs on-device, meaning locally inside the robot with no network connection required.

Q. Running that on-device sounds heavy. How did you make it work?

Part Leader Moon-sub Jin | We used model-lightweighting and optimization to fit real on-device constraints. Specifically, we applied pruning to remove less important parts of the model and reduce size, and quantization to represent weights with fewer bits to cut compute. The payoff is strong vision capability without relying on high-end cloud infrastructure—lowering costs and improving privacy by keeping data off external networks.
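The interview does not say which pruning criterion or bit width the Lab uses. As a minimal sketch, assuming unstructured magnitude pruning and symmetric per-tensor int8 quantization (common textbook choices, not confirmed by the source), the two steps look like this on a toy weight vector. The function names are illustrative only.

```python
from typing import List, Tuple


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # how many weights to drop
    if k == 0:
        return list(weights)
    # Indices sorted by |w|, smallest first; the first k are pruned.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate float weights."""
    return [scale * v for v in q]


if __name__ == "__main__":
    w = [0.02, -0.75, 0.31, -0.04, 1.27, 0.005, -0.6, 0.12]
    pruned = magnitude_prune(w, sparsity=0.5)  # keeps the 4 largest |w|
    q, scale = quantize_int8(pruned)
    print(pruned)
    print(q, scale)
```

Pruning shrinks the number of non-zero weights, while quantization shrinks every remaining weight from 32-bit floats to 8-bit integers plus one shared scale; the two compose, which is why they are usually applied together.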

Q. How broad is the VLM’s descriptive range?

Part Leader Moon-sub Jin | It depends on the robot’s mission. On DAL-e, our guide robot, the VLM can identify clothing color or whether someone’s holding a coffee—small details that help it approach people more naturally. It doesn’t just read attire; it can recognize behaviors, surroundings, and the context of the entire camera view.

Q. Where does this show up in real service?

Part Leader Moon-sub Jin | The DAL-e at Hyundai’s Gangnam office already uses it for greetings—recognizing clothing and items in hand to create a more personable welcome. Beyond robots, Intelligent CCTV can leverage the same capability to flag anomalies such as fires, fights, or jaywalking. And as robot roles diversify, the applications expand with them.

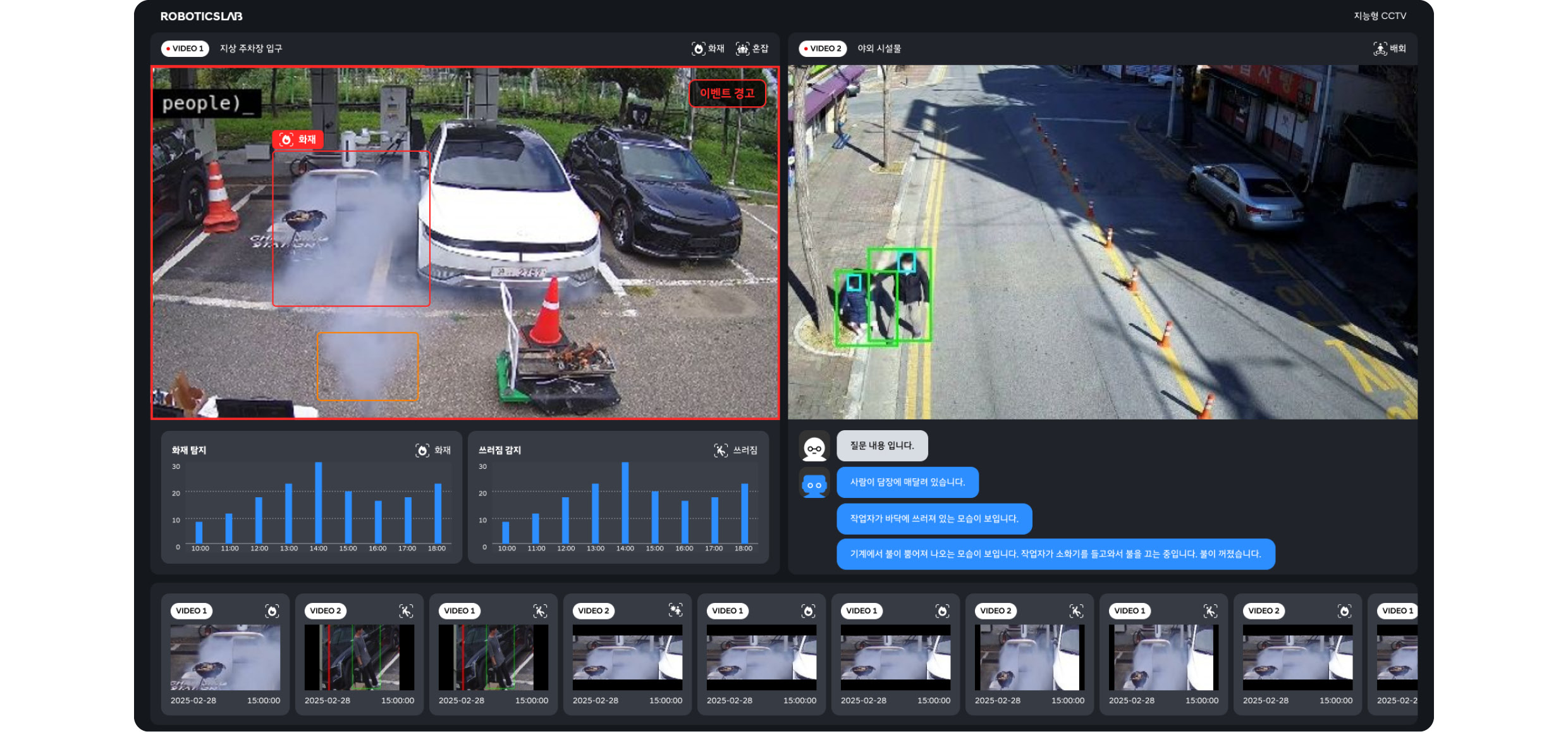

Q. What separates Intelligent CCTV from conventional systems?

Senior Research Engineer Yong-sang Yoon | Traditional CCTV is passive—it watches and records. Our Intelligent CCTV is proactive. It acts like a digital sentry, using AI video analytics to actively manage safety. For example, conventional systems often need the roadway pre-mapped to detect jaywalking. Intelligent CCTV can self-segment roadway versus sidewalk and determine whether a pedestrian is jaywalking on its own. It can also detect someone collapsing unconscious, vandalism, or fighting—and it can link with Robotics Lab robots to respond in real time.
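The interview does not describe how the jaywalking rule is implemented. As a hedged sketch, assuming a segmentation network has already labeled each image cell as roadway, sidewalk, or crosswalk, the decision can reduce to checking where a tracked pedestrian’s feet land over several frames. `Region`, `is_jaywalking`, and the three-frame persistence threshold are hypothetical.

```python
from enum import Enum
from typing import List, Tuple


class Region(Enum):
    SIDEWALK = 0
    ROADWAY = 1
    CROSSWALK = 2


# Hypothetical segmentation output: one Region label per grid cell, indexed [row][col].
SegMask = List[List[Region]]
BBox = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in grid cells


def foot_point(box: BBox) -> Tuple[int, int]:
    """Bottom-center of a pedestrian box approximates where the person is standing."""
    x_min, _, x_max, y_max = box
    return (x_min + x_max) // 2, y_max


def is_jaywalking(mask: SegMask, track: List[BBox], min_frames: int = 3) -> bool:
    """Flag a track whose foot point stays on ROADWAY for `min_frames` consecutive frames.

    Requiring persistence suppresses one-frame flickers from detection noise.
    """
    streak = 0
    for box in track:
        x, y = foot_point(box)
        on_road = mask[y][x] is Region.ROADWAY
        streak = streak + 1 if on_road else 0
        if streak >= min_frames:
            return True
    return False
```

Because the roadway/sidewalk labels come from the model rather than a hand-drawn map, the same rule would work on a new camera without pre-mapping, which is the distinction drawn in the answer above.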

Q. How accurate is anomaly detection right now?

Senior Research Engineer Yong-sang Yoon | Using baselines built from datasets collected by KISA and the National Information Society Agency (NIA) AI Hub, our internal evaluation shows 98.4% accuracy for intrusion, loitering, and emergency-patient detection. KISA certification testing is underway. Working with our lightweight VLM, we’re also validating more complex scenes like fighting and vandalism. At present, we can detect about seven anomaly types in total, including overcrowding (excessive clustering in a defined zone) and jaywalking.

Q. Once it detects something, what happens next?

Senior Research Engineer Yong-sang Yoon | Detection is only step one. Through our in-house API, we can call linked robots to run response scenarios. If an intruder is detected, we can dispatch a robot to the scene and use Facey to verify whether the person is authorized—enabling coordinated, real-time action. On the flip side, if a customer enters, we can dispatch a concierge robot to greet them. So it’s valuable for service flows, not just emergencies.
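The Lab’s in-house API is not public, so the following is only an illustrative sketch of the two-step flow described above: an event type maps to a response scenario, and an intrusion escalates only if face verification fails. The escalation step (warning plus control-room alert) follows the NARCHON trial described later in this article. Every class, function, and event label here is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Set


@dataclass(frozen=True)
class Event:
    kind: str  # hypothetical labels, e.g. "intrusion", "customer_entry"
    zone: str


@dataclass(frozen=True)
class Action:
    target: str  # which robot role (or the control room) should act
    task: str
    zone: str


def on_intrusion(ev: Event) -> List[Action]:
    # Step 1: send a patrol robot to the scene to check the person's face.
    return [Action("security_robot", "go_and_verify_face", ev.zone)]


def on_customer_entry(ev: Event) -> List[Action]:
    # Service flow rather than an emergency: send a concierge to greet.
    return [Action("concierge_robot", "greet", ev.zone)]


# Routing table: detected event type -> response scenario.
HANDLERS: Dict[str, Callable[[Event], List[Action]]] = {
    "intrusion": on_intrusion,
    "customer_entry": on_customer_entry,
}


def dispatch(ev: Event) -> List[Action]:
    handler = HANDLERS.get(ev.kind)
    return handler(ev) if handler else []  # no scenario registered for this event type


def on_face_result(zone: str, face_id: Optional[str], registered: Set[str]) -> List[Action]:
    """Step 2: after on-site face recognition, escalate only if the person is not registered."""
    if face_id is not None and face_id in registered:
        return []
    return [Action("security_robot", "issue_warning", zone),
            Action("control_room", "alert", zone)]
```

Keeping scenarios in a lookup table means a new event type (say, overcrowding) only needs a new handler, not changes to the detection side.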

Q. Is deeper integration with other Robotics Lab tech on the roadmap?

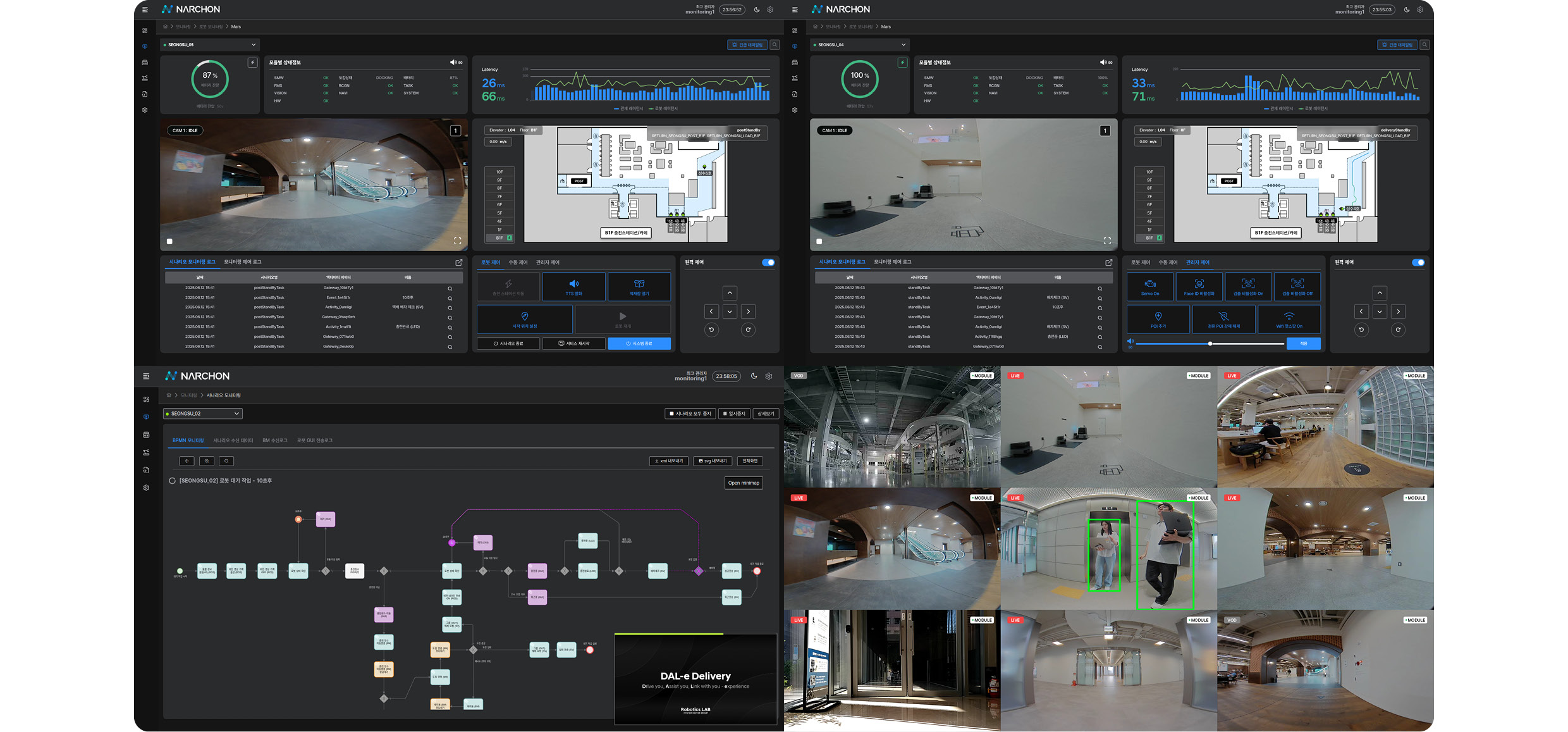

Senior Research Engineer Yong-sang Yoon | Definitely. Intelligent CCTV becomes even more powerful when paired with NARCHON, our control system for commanding robots. Today we support 12 channels of simultaneous event detection; with performance upgrades and optimization, we’re targeting 64 and 128 channels, building a safety-management solution for smart cities, smart buildings, public spaces, and industrial sites.

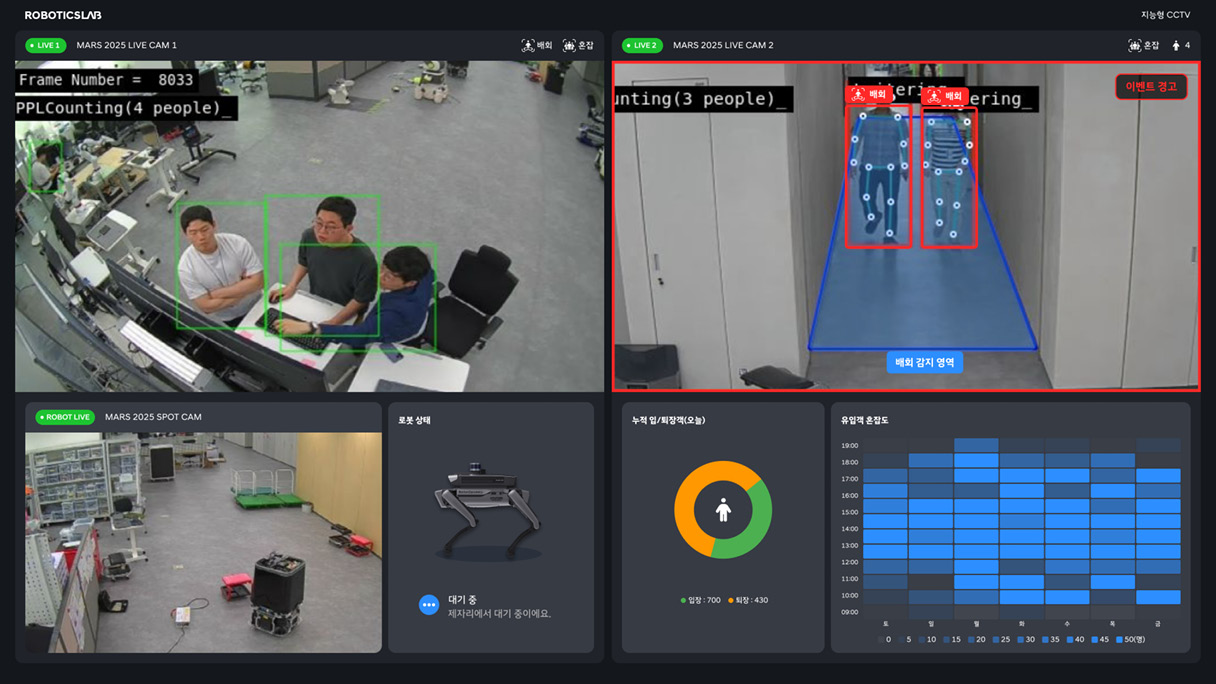

Q. NARCHON isn’t a familiar name—what does it mean?

Part Leader Jung-min Ryu | NARCHON is short for “Next Architecture ON,” our next-generation robot command system. It reflects our goal of setting a new benchmark for integrated control in environments like smart factories, smart buildings, and smart cities.

NARCHON operates like a central nervous system, connecting robots with infrastructure and orchestrating everything as one ecosystem. It can pre-open doors or call elevators so a robot flows through its route without stopping, much as Korea’s “Hi-Pass” electronic toll system does for cars, and it picks optimal paths by tracking congestion in specific zones and monitoring other robots’ positions inside a building.
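The interview does not describe NARCHON’s planner. As a minimal sketch, assuming the building is modeled as a graph of zones and each zone carries a congestion score normalized to [0, 1] (for example from headcounts or nearby robot positions), congestion-aware routing can be expressed as Dijkstra’s algorithm with a penalty added for entering busy zones. The graph, `penalty` weight, and zone names are hypothetical.

```python
import heapq
from typing import Dict, List, Tuple

Graph = Dict[str, List[Tuple[str, float]]]  # zone -> [(neighbor zone, distance in meters)]


def congestion_aware_path(graph: Graph, congestion: Dict[str, float],
                          start: str, goal: str,
                          penalty: float = 10.0) -> Tuple[float, List[str]]:
    """Dijkstra where entering zone z costs distance + penalty * congestion[z].

    Congestion scores are assumed non-negative, so all edge costs stay
    non-negative and Dijkstra remains correct.
    """
    dist: Dict[str, float] = {start: 0.0}
    prev: Dict[str, str] = {}
    heap = [(0.0, start)]
    visited = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in visited:
            continue  # stale heap entry
        visited.add(node)
        if node == goal:
            break
        for nxt, length in graph.get(node, []):
            cost = d + length + penalty * congestion.get(nxt, 0.0)
            if cost < dist.get(nxt, float("inf")):
                dist[nxt] = cost
                prev[nxt] = node
                heapq.heappush(heap, (cost, nxt))
    if goal not in dist:
        return float("inf"), []  # unreachable
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return dist[goal], path[::-1]
```

With a crowded shorter hallway, the planner can prefer a slightly longer but clear one; tuning `penalty` trades distance against crowd avoidance.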

Q. When you’re controlling lots of robots, doesn’t the whole system become fragile if the network gets flaky?

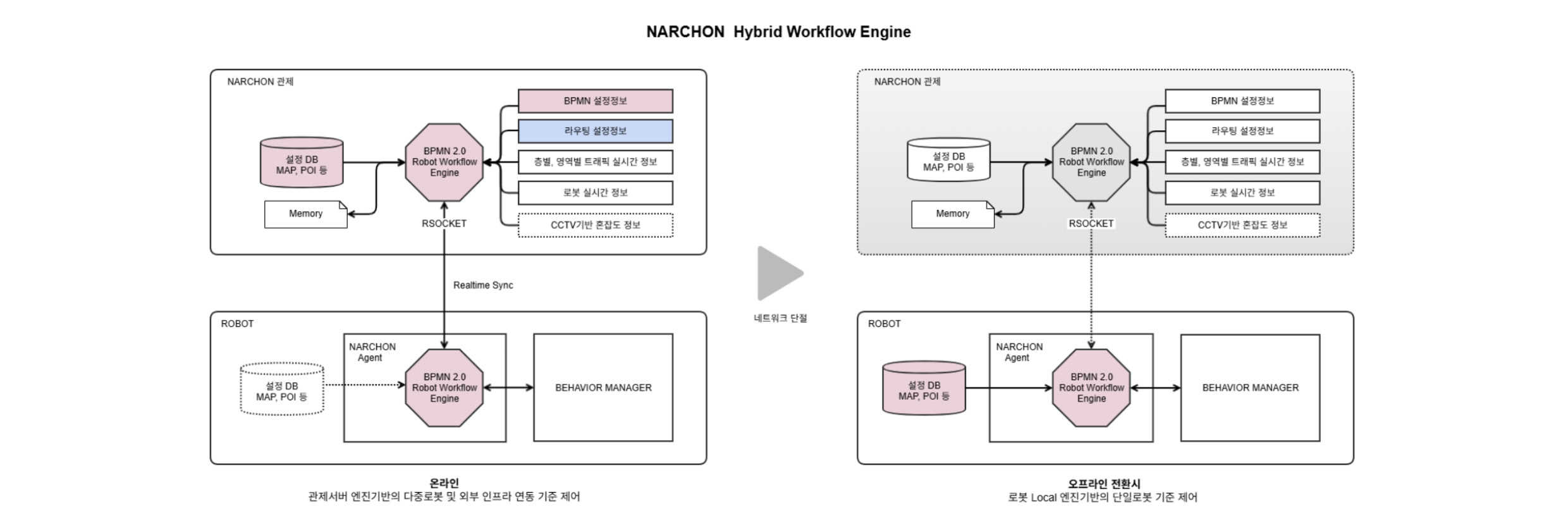

Part Leader Jung-min Ryu | That’s exactly why we built a homegrown BPMN 2.0-based hybrid workflow engine. It runs on both the robots and the control system, so even if connectivity drops, robots can keep executing scenarios autonomously. For example, an office robot may move to a safe waiting point or hug a wall while it attempts reconnection. On a factory floor, it can resume the last task automatically. When communications recover, everything re-syncs and continues.
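The workflow engine itself is BPMN 2.0-based, which this sketch does not attempt to reproduce. Instead, a plain Python state machine illustrates the fallback policy described above, under our own assumptions: the robot caches its scenario steps, an office robot parks and waits when the link drops, a factory robot keeps executing, and anything finished offline is reported back for re-sync. All names are hypothetical.

```python
from enum import Enum
from typing import List, Optional


class Site(Enum):
    OFFICE = "office"
    FACTORY = "factory"


class OnRobotWorkflow:
    """Hypothetical robot-side executor that keeps a local copy of its scenario."""

    def __init__(self, site: Site, tasks: List[str]) -> None:
        self.site = site
        self.pending = list(tasks)         # scenario steps cached on the robot
        self.online = True
        self.offline_done: List[str] = []  # progress to report once reconnected

    def on_disconnect(self) -> str:
        self.online = False
        # Office: park somewhere safe. Factory: carry on with the cached steps.
        return "move_to_safe_waiting_point" if self.site is Site.OFFICE else "resume_last_task"

    def step(self) -> Optional[str]:
        """Run the next cached step, or hold position if the site policy says to wait."""
        if not self.pending:
            return None
        if not self.online and self.site is Site.OFFICE:
            return "hold_and_retry_connection"
        task = self.pending.pop(0)
        if not self.online:
            self.offline_done.append(task)
        return task

    def on_reconnect(self) -> List[str]:
        """Return steps finished while offline so the control system can re-sync."""
        self.online = True
        report, self.offline_done = self.offline_done, []
        return report
```

Splitting execution between the robot and the control system this way means the server is needed for coordination, not for every step, which is what lets the robot ride out a dropped connection.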

Q. Is it deployed anywhere yet?

Part Leader Jung-min Ryu | An early version is running at HMGICS (HMG Singapore Global Innovation Center), and the full release is deployed at Factorial Seongsu. We’ve integrated a wide range of infrastructure and services there (ordering systems, elevators, gate linkage, F&B delivery, parcel delivery) and stabilized operations with congestion and floor-traffic management plus non-stop gate-pass functionality. The next step is expansion across other HMG plants and offices.

Q. Was Intelligent CCTV integration always part of the plan?

Part Leader Jung-min Ryu | Yes. We’ve already run joint trials, including projects with the quadruped robot SPOT. In one scenario, when Intelligent CCTV detects an intruder, NARCHON dispatches a robot to issue a warning and sends an alert to the control room for human response. And in crowded interiors, we don’t rely solely on onboard lidar: Intelligent CCTV headcounts can decide whether the robot should enter a space in the first place.

Q. What is the Robotics Lab’s autonomous payload for SPOT?

Part Leader Tae-ho Kim | It’s an in-house payload that enables Boston Dynamics’ SPOT to operate autonomously. Now in its third generation, it integrates our Vision-AI module, autonomous-drive control, intelligent task control, and speech-output control—powered entirely by Robotics Lab software. Through our task-control framework, a minimal network command can trigger execution, and SPOT handles vision, speech AI, autonomy, and hardware control on-device, aligned with the mission scenario.

Q. What improved versus the previous payload?

Part Leader Tae-ho Kim | We cut weight from 14 kg to about 8 kg, roughly a 40% reduction. Despite the lighter build, it’s now waterproof for outdoor use, and we added magnetic covers for easier disassembly and field repairs. We also integrated a 5G modem for more robust communications and installed an RF tag reader on top. During security patrols, if SPOT finds someone in a restricted area, it can send an alert with a photo to the control system, and if that person doesn’t tag an ID at the top-mounted reader, the incident is logged.
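Taking the approximate 8 kg figure at face value, the reduction works out as:

$$
\frac{14\,\text{kg} - 8\,\text{kg}}{14\,\text{kg}} = \frac{6}{14} \approx 0.43 \quad (\approx 43\%)
$$

which the answer above rounds to “roughly 40%.”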

Q. On-device face recognition plus autonomy sounds like a tough integration problem. What’s the real challenge?

Part Leader Tae-ho Kim | Building each function isn’t the hard part—the hard part is integrating and orchestrating everything into a service that customers genuinely value.

Part Leader Moon-sub Jin | And we’re not stopping at face recognition. We added security-AI features, and in total four or more AI models run on the payload. To run them in real time on-device, we built a GPU-based parallel inference pipeline.
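The payload’s pipeline details are not public. The sketch below shows only the fan-out/gather structure of running several independent models on the same camera frame concurrently, using Python threads as a stand-in for GPU streams; the model names and `placeholder` networks are hypothetical. In practice, threads only help when each model call runs in native code that releases Python’s GIL.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict

Model = Callable[[Any], Any]


def placeholder(name: str) -> Model:
    """Stand-in for a real network: just tags the frame with the model's name."""
    return lambda frame: (name, frame)


# Hypothetical model set; the interview only says "four or more" models run on the payload.
MODELS: Dict[str, Model] = {
    name: placeholder(name)
    for name in ("face_recognition", "person_detection", "loitering", "fall_detection")
}


def infer_frame(models: Dict[str, Model], frame: Any,
                pool: ThreadPoolExecutor) -> Dict[str, Any]:
    """Submit one frame to every model at once, then wait for and gather all results."""
    futures = {name: pool.submit(model, frame) for name, model in models.items()}
    return {name: fut.result() for name, fut in futures.items()}


if __name__ == "__main__":
    # One long-lived pool, sized to the number of models, reused for every frame.
    with ThreadPoolExecutor(max_workers=len(MODELS)) as pool:
        for frame_id in range(3):
            print(infer_frame(MODELS, frame_id, pool))
```

The models chosen here are independent of one another; a model that consumes another’s output (for example, an embedding network fed by a face detector) would have to run as a later stage rather than in the same parallel batch.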

Q. Please introduce DAL-e and DAL-e Delivery.

Part Leader Ji-yong Jin | DAL-e is a service robot built on the Robotics Lab’s first Plug-and-Drive (PnD) module. It uses 2D lidar and cameras and has provided guidance services across multiple locations, starting with the Hyundai Songpa-daero branch. DAL-e Delivery is an indoor, point-to-point delivery platform based on DAL-e’s PnD module, equipped with two 3D lidars and four cameras. Both robots deliver guidance and delivery services using in-house autonomous-driving technology built around their sensor suites.

Q. A lot of guide robots are basically rolling screens. DAL-e looks genuinely charming—why?

Part Leader Jia Lee | We designed DAL-e not as a moving kiosk “product,” but as a warm, friendly presence for visitors and employees. Along with a cute exterior and soft finish, we gave it the voice and cadence of a 10-year-old and built in natural interaction—facial expressions, arm movements, eye contact—so conversations feel more human.

Q. It recognizes faces, reads emotions, dodges obstacles, and chats. How do you make all that work as one system?

Part Leader Eui-Hyeok Lee | DAL-e is essentially a rolling integration demo of Robotics Lab technologies. Using our controller and camera system, it estimates age range and gender, infers emotion from facial expressions, and recognizes employees and registered customers. For autonomous driving, its camera and lidar stack detect obstacles and reroute using our algorithms. Those sensor inputs feed the dialogue system so it can trigger the right facial expression, greeting, or “please make way” response in context.

Q. How advanced is DAL-e’s dialogue system today?

Part Leader Eui-Hyeok Lee | Early on, the dialogue was simple—more conditional than conversational. But as LLM-based AI has advanced, dialogue capability has leapt forward. We now fuse multiple sensor inputs to build a multimodal dialogue system that coordinates not just speech but varied reactions. It doesn’t answer the same way every time—it adapts to context—and we’re developing speech synthesis that adjusts voice and intonation based on the user’s emotion and the situation.

Q. Functionally, how does DAL-e Delivery differ from DAL-e?

Part Leader Ji-yong Jin | DAL-e is designed to guide people; DAL-e Delivery is built to bring food and drinks directly to the destination. DAL-e is an omnidirectional indoor PnD platform optimized for engagement in tighter spaces. DAL-e Delivery adds suspension to handle uneven floors and elevator thresholds—and it keeps spill-prone cargo stable while moving.

Q. How capable is DAL-e Delivery’s autonomous driving?

Part Leader Ji-yong Jin | DAL-e uses 2D lidar; DAL-e Delivery upgrades to 3D lidars plus cameras. Through sensor fusion, it recognizes moving obstacles, predicts their motion, and generates routes in real time. We tuned motion profiles to avoid harsh acceleration and braking, so travel is smoother and more stable. With our high-reliability in-house SLAM and navigation, it can autonomously operate across large indoor and outdoor spaces.
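The interview does not say which motion profile DAL-e Delivery uses. A trapezoidal velocity profile is one standard way to cap acceleration and braking (S-curve profiles additionally limit jerk), so treat the following as an illustrative sketch with made-up limits, not the Lab’s controller.

```python
import math
from typing import Tuple


def trapezoid_profile(distance: float, v_max: float, a_max: float) -> Tuple[float, float, float]:
    """Return (t_accel, t_cruise, t_total) for an acceleration-limited rest-to-rest move."""
    if distance <= 0 or v_max <= 0 or a_max <= 0:
        raise ValueError("distance, v_max and a_max must be positive")
    d_ramps = v_max ** 2 / a_max  # distance spent speeding up plus slowing down
    if d_ramps <= distance:
        t_acc = v_max / a_max
        t_cruise = (distance - d_ramps) / v_max
    else:  # too short to reach v_max: triangular profile
        t_acc = math.sqrt(distance / a_max)
        t_cruise = 0.0
    return t_acc, t_cruise, 2 * t_acc + t_cruise


def velocity_at(t: float, distance: float, v_max: float, a_max: float) -> float:
    """Commanded speed at time t; acceleration never exceeds a_max in magnitude."""
    t_acc, t_cruise, t_total = trapezoid_profile(distance, v_max, a_max)
    v_peak = a_max * t_acc
    if t <= 0 or t >= t_total:
        return 0.0
    if t < t_acc:
        return a_max * t                 # ramp up
    if t < t_acc + t_cruise:
        return v_peak                    # cruise
    return a_max * (t_total - t)         # ramp down
```

For example, a 10 m run with `v_max = 1.0` m/s and `a_max = 0.5` m/s² spends 2 s accelerating, 8 s cruising, and 2 s braking; lowering `a_max` trades a longer trip for gentler starts and stops, which matters for spill-prone cargo.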

Q. What’s unique about DAL-e Delivery’s design versus DAL-e?

Part Leader Jia Lee | We reduced exposed sensors and lowered the center of gravity for stability, giving it a refined, premium look that separates it from typical delivery bots. A sliding cargo bay and lighting improve usability and perceived quality at handoff. From the ordering app to the robot UI, we designed a seamless journey from summon to delivery.

Q. Where is DAL-e Delivery headed next?

Part Leader Ji-yong Jin | In 2022, we successfully piloted doorstep food delivery in an apartment complex in Gwanggyo. Today it’s fully capable of long-range outdoor delivery. The goal is to evolve it into a general-purpose delivery platform—so autonomous delivery becomes something anyone can deploy easily.

HMG’s Robotics Lab is keeping up a relentless pace, developing robots that don’t just impress on paper but slot cleanly into real life. Its software-driven approach is what turns individual robots into an ecosystem, bringing the “robot-intelligence society” into reality across smart cities, smart factories, and public spaces. This is engineering aimed squarely at everyday value, and if the trajectory holds, the payoff will be measured in safer spaces, smoother service, and a better day-to-day experience.

Photography by Hyuk-soo Cho